So for those of you following me on Facebook, I’ve lately been working on a programming project that takes photographs and turns them into impressionist paintings. I have named this program DEGAS, which stands for Digitally Extrapolated Graphics via Algorithmic Strokes. (I’ve always loved a good name-cronym, especially when it’s a pun. 🙂 )

It’s not done with neural networks, the way Ostagram.ru does it. Although neural networks are all the rage right now, they only mimic the features and product of a painting, not the process.

It’s also not a filter – that is to say that the process is not fully automated. You don’t just choose a photograph and it works on it alone and then *boom* you have a painting. You have to “paint” the details onto the photo by choosing your brushes, size, angle range, and scatter, and DEGAS automates color selection and brush orientation based upon what fits the color contours the best.

In essence, it’s an impressionist Gatling brush. The choice of what features to emphasize and their final form are still more or less in the hands of the painter, but the most time-consuming aspects are automated.

It’s written in plain, vanilla HTML5/Canvas. No special libraries (although I tinkered with gpu.js for a few operations that didn’t pan out). And the currently used portion of the codebase comes in at under 1,000 lines. Once I work out a few bugs, and design a nicer UI for it, I’ll probably release the source code for anyone who wishes to play with it.

The Process

So, how does it work? First it creates several canvases:

- The Display canvas.

- A Source canvas to hold the initial image.

- A Blur Buffer canvas which holds a copy of the initial image, but blurred to a certain value (more on that in a bit).

- A general Buffer canvas for compositing things on the fly.

- A Brush canvas to hold the brush’s shape; and

- A Brush Buffer canvas where color matching takes place.

The source image is loaded into the Source, Blur Buffer, and Display canvases. The Display canvas is appended to the body of the document, and the Blur Buffer canvas is blurred by a few pixels to smooth out rough details.
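For the curious, here is a minimal sketch of what blurring the Blur Buffer could look like. It is not DEGAS's actual code: the post does not say which blur is used, so a plain box blur is assumed, and the name `boxBlur` is made up for illustration. It works on any `{ width, height, data }` object with RGBA bytes, which is the shape a canvas context's `getImageData` returns.

```javascript
// Hypothetical helper: box-blurs an ImageData-like object { width, height, data }
// holding RGBA bytes. The radius stands in for the "few pixels" of blur; the
// actual blur method is an assumption.
function boxBlur(src, radius) {
  const { width, height, data } = src;
  const out = new Uint8ClampedArray(data.length);
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      const sum = [0, 0, 0, 0];
      let count = 0;
      // Average every pixel of the (2r+1) x (2r+1) window that lies inside the image.
      for (let dy = -radius; dy <= radius; dy++) {
        for (let dx = -radius; dx <= radius; dx++) {
          const sx = x + dx, sy = y + dy;
          if (sx < 0 || sy < 0 || sx >= width || sy >= height) continue;
          const i = (sy * width + sx) * 4;
          for (let c = 0; c < 4; c++) sum[c] += data[i + c];
          count++;
        }
      }
      const o = (y * width + x) * 4;
      for (let c = 0; c < 4; c++) out[o + c] = sum[c] / count;
    }
  }
  // The source is left untouched; a new buffer is returned.
  return { width, height, data: out };
}
```

A larger radius smooths more aggressively, at a cost that grows with the square of the radius in this naive form.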

The initial brush shape is then loaded into the Brush canvas. A brush is nothing more than an outline on a transparent background. Generally it also has antialiased edges and some alpha for thin paint. (Brushes are generally 512×512.)

While there are several ways to add strokes to the painting, each stroke follows the same procedure when it is placed:

- First, it grabs the color of the pixel at the center of the stroke.

- It tints the Brush canvas to this color. All detail on the brush shape is lost, but all transparency is preserved.

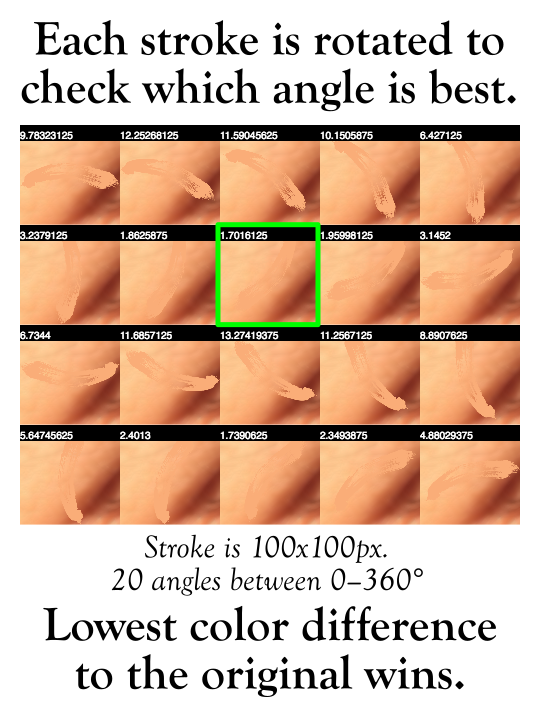

- It then reads out which angles it is allowed to paint strokes at. By default it has 16 equally spaced angles between 0 and 180 degrees. I’ve had even better results with 20 angles across the full 360 degrees.

- For each allowable angle:

    - It reaches into the Blur Buffer and takes the area of the image that the brush will be painted on and writes it to the Brush Buffer.

    - It then paints the brush at the appropriate angle on top of the Brush Buffer.

    - It then runs a comparison metric on the color difference between the original area on the Blur Buffer and the painted stroke over the same area on the Brush Buffer.

- It then paints the brush onto the Display Canvas in the orientation that fits the color and contour of the original image the best.

And it does this dozens of times per second to stunning effect.
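The per-stroke procedure above can be sketched roughly as follows. This is an illustration under assumptions, not the real DEGAS source: every function name is hypothetical, the brush is reduced to a square alpha mask (which matches the tinting step, where only transparency survives), the stroke color is read from the same blurred reference buffer, and the comparison metric is assumed to be a sum of squared RGB differences, since the post does not name one.

```javascript
// Images are { width, height, data } with RGBA bytes; the brush is a
// hypothetical alpha mask { size, alpha } with alpha values in 0..1.

// Evenly spaced candidate angles (in radians) across a span given in degrees.
function candidateAngles(count, spanDegrees) {
  const step = (spanDegrees * Math.PI / 180) / count;
  return Array.from({ length: count }, (_, i) => i * step);
}

// Brush alpha at offset (dx, dy) from the stroke center, with the brush
// rotated by `angle` (nearest-neighbour inverse mapping).
function brushAlphaAt(brush, dx, dy, angle) {
  const c = Math.cos(angle), s = Math.sin(angle);
  const half = (brush.size - 1) / 2;
  const bx = Math.round(c * dx + s * dy + half);
  const by = Math.round(-s * dx + c * dy + half);
  if (bx < 0 || by < 0 || bx >= brush.size || by >= brush.size) return 0;
  return brush.alpha[by * brush.size + bx];
}

// Assumed metric: sum of squared RGB differences between the reference area
// and the same area with the tinted brush composited over it.
function strokeError(ref, brush, cx, cy, angle, color) {
  const reach = Math.ceil(brush.size * Math.SQRT1_2); // covers the rotated square
  let error = 0;
  for (let dy = -reach; dy <= reach; dy++) {
    for (let dx = -reach; dx <= reach; dx++) {
      const x = cx + dx, y = cy + dy;
      if (x < 0 || y < 0 || x >= ref.width || y >= ref.height) continue;
      const a = brushAlphaAt(brush, dx, dy, angle);
      if (a === 0) continue; // uncovered pixels are unchanged, so no error
      const i = (y * ref.width + x) * 4;
      for (let ch = 0; ch < 3; ch++) {
        const painted = a * color[ch] + (1 - a) * ref.data[i + ch];
        const d = painted - ref.data[i + ch];
        error += d * d;
      }
    }
  }
  return error;
}

// Picks the candidate angle whose painted stroke differs least from the reference.
function bestAngle(ref, brush, cx, cy, angles) {
  const i = (cy * ref.width + cx) * 4;
  const color = [ref.data[i], ref.data[i + 1], ref.data[i + 2]];
  let best = angles[0], bestError = Infinity;
  for (const angle of angles) {
    const err = strokeError(ref, brush, cx, cy, angle, color);
    if (err < bestError) { bestError = err; best = angle; }
  }
  return { angle: best, color, error: bestError };
}
```

Calling `bestAngle(blurred, brush, x, y, candidateAngles(16, 180))` would mirror the default of 16 angles between 0 and 180 degrees. The cost grows linearly with the number of angles, which is why more angles means slower painting.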

Some of the parameters that can be changed to affect the spread of the strokes include:

- Averaging the color of the stroke, or increasing the saturation, etc.

- The blurriness of the Blur Buffer. This helps make better stroke choices when working with noisy images, or features like hair.

- The shape of the brush – I have 9 shapes made from actual scanned brush strokes.

- The size and scatter of the strokes under the mouse. One generally starts out with a large size and scatter (300,300) for large features, and then fills in the details with smaller strokes with less scatter (150,100 to 50,40).

- What angles are allowable. The more angles, the slower the process as DEGAS needs to compare each iteration, but at the same time the better the match. Or if you want to force the brush in a certain direction you can do that, too.
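To make the size and scatter parameters a bit more concrete, here is a small sketch of how stroke positions might be scattered around the mouse. The function name and the interpretation are assumptions: scatter is treated as the diameter of a disc around the pointer, which the post does not actually specify.

```javascript
// Hypothetical helper: picks stroke centers around the pointer. `scatter` is
// assumed to be the diameter of a disc centered on the pointer. `random` can be
// swapped for a seeded generator to make results repeatable.
function scatterStrokes(pointerX, pointerY, size, scatter, count, random = Math.random) {
  const strokes = [];
  for (let i = 0; i < count; i++) {
    // The square root keeps points uniformly distributed over the disc's area
    // instead of clustering at the center.
    const r = (scatter / 2) * Math.sqrt(random());
    const theta = 2 * Math.PI * random();
    strokes.push({
      x: Math.round(pointerX + r * Math.cos(theta)),
      y: Math.round(pointerY + r * Math.sin(theta)),
      size,
    });
  }
  return strokes;
}
```

With this reading, `scatterStrokes(x, y, 300, 300, 10)` would block in large features, and `scatterStrokes(x, y, 50, 40, 10)` would fill in details close to the pointer.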

DEGAS also keeps track of each stroke. As a result, it can re-paint an image while loading a painting back into memory, which looks like this:

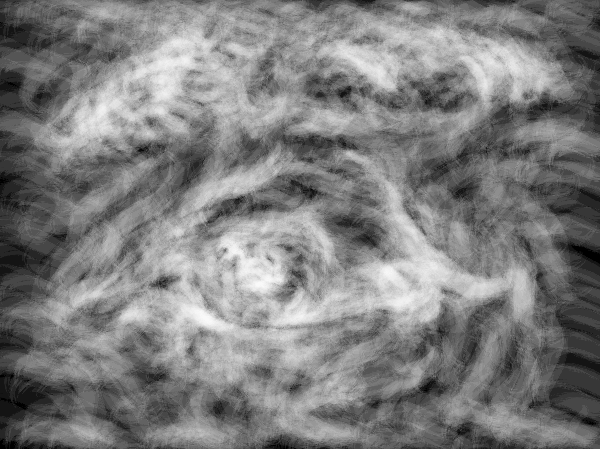
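A stroke log like this can be sketched in a few lines. The field names and helpers here are hypothetical, chosen only to illustrate the idea of recording strokes and replaying them in order. How DEGAS actually stores its strokes is not described in this post.

```javascript
// Hypothetical stroke record: position, size, chosen angle, color, and a brush id.
function recordStroke(log, stroke) {
  // Copy the fields so later changes to the caller's object don't alter the log.
  log.push({
    x: stroke.x, y: stroke.y, size: stroke.size,
    angle: stroke.angle, color: [...stroke.color], brush: stroke.brush,
  });
}

// Replays strokes in their original order. `paint` is whatever routine draws a
// single stroke; passing it in keeps this sketch independent of the canvas.
function replay(log, paint) {
  for (const stroke of log) paint(stroke);
  return log.length;
}

// Plain JSON is enough to save and reload a log made of numbers and arrays.
const saveLog = (log) => JSON.stringify(log);
const loadLog = (text) => JSON.parse(text);
```

In a browser, replaying a small batch of strokes per `requestAnimationFrame` callback, rather than all at once, would give the animated re-painting effect.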

And because it keeps track of all of those strokes, it can also do some really fun post-processing. It can generate a height map of the paint:

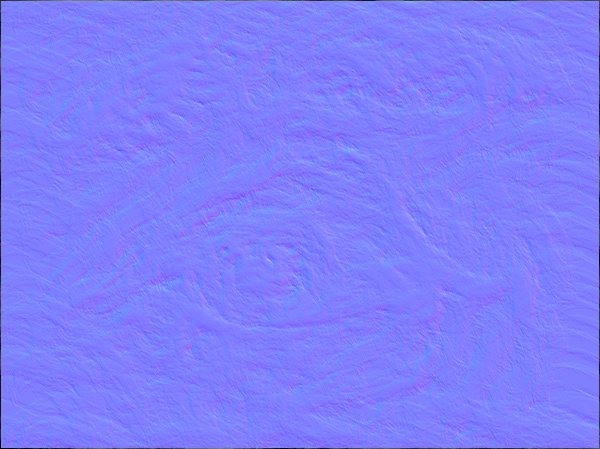
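One plausible way to build such a height map from the stroke log is to let every stroke deposit "paint" in proportion to its brush alpha. The sketch below assumes exactly that. The function name is made up, the brush is an unrotated alpha mask for brevity, and simple accumulation is only one option (taking the maximum, or letting new strokes partly flatten old ones, would be others).

```javascript
// Hypothetical: adds one stroke's thickness to a height map stored as a
// Float32Array of width * rows values. The brush is { size, alpha } with alpha
// in 0..1, stamped without rotation to keep the sketch short.
function addStrokeHeight(height, width, rows, brush, cx, cy, thickness = 1) {
  const half = Math.floor(brush.size / 2);
  for (let by = 0; by < brush.size; by++) {
    for (let bx = 0; bx < brush.size; bx++) {
      const x = cx + bx - half, y = cy + by - half;
      if (x < 0 || y < 0 || x >= width || y >= rows) continue; // clip to the canvas
      // Assumption: more opaque parts of the brush deposit more paint.
      height[y * width + x] += thickness * brush.alpha[by * brush.size + bx];
    }
  }
  return height;
}
```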

And from that height map, generate a normal map:
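A common way to get from a height map to normals is to take central differences of the height in x and y. The sketch below assumes that approach. The function name and the `strength` parameter are illustrative, and the post does not say how DEGAS computed its normal map.

```javascript
// Hypothetical: derives unit normals from a height map with central differences.
// Returns a Float32Array holding (x, y, z) per pixel.
function normalsFromHeight(height, width, rows, strength = 1) {
  const normals = new Float32Array(width * rows * 3);
  // Clamp coordinates so edge pixels reuse their nearest neighbour.
  const h = (x, y) =>
    height[Math.min(rows - 1, Math.max(0, y)) * width + Math.min(width - 1, Math.max(0, x))];
  for (let y = 0; y < rows; y++) {
    for (let x = 0; x < width; x++) {
      const dx = (h(x + 1, y) - h(x - 1, y)) * 0.5 * strength;
      const dy = (h(x, y + 1) - h(x, y - 1)) * 0.5 * strength;
      // The normal of the surface z = h(x, y) is (-dh/dx, -dh/dy, 1), normalised.
      const len = Math.hypot(dx, dy, 1);
      const o = (y * width + x) * 3;
      normals[o] = -dx / len;
      normals[o + 1] = -dy / len;
      normals[o + 2] = 1 / len;
    }
  }
  return normals;
}
```

To store the result as an image, each component is usually remapped from the range -1..1 to 0..255, which is what gives normal maps their familiar bluish look.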

And then from that normal map, add the depth of the paint by simulating light casting onto the strokes:
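The lighting step can be approximated with simple Lambertian (diffuse) shading: brighten pixels whose normals face the light and darken those that face away. This is a sketch under that assumption, with a made-up function name and made-up defaults for the light direction and ambient level. The actual lighting model DEGAS used is not stated here.

```javascript
// Hypothetical: Lambertian shading of an RGBA image { width, height, data }
// using per-pixel unit normals stored as (x, y, z) triples. `light` points from
// the surface toward the light and is assumed to be above it (z > 0).
// `ambient` keeps unlit areas from going fully black.
function shade(image, normals, light = [-1, -1, 1], ambient = 0.3) {
  const len = Math.hypot(...light);
  const [lx, ly, lz] = light.map((v) => v / len);
  const out = new Uint8ClampedArray(image.data.length);
  for (let p = 0; p < image.width * image.height; p++) {
    const n = p * 3, i = p * 4;
    const diffuse = Math.max(0, normals[n] * lx + normals[n + 1] * ly + normals[n + 2] * lz);
    // Divide by the flat-surface brightness so unpainted, flat areas keep
    // their original color.
    const gain = (ambient + (1 - ambient) * diffuse) / (ambient + (1 - ambient) * lz);
    for (let c = 0; c < 3; c++) out[i + c] = image.data[i + c] * gain;
    out[i + 3] = image.data[i + 3]; // alpha is passed through unchanged
  }
  return { width: image.width, height: image.height, data: out };
}
```

With the default light coming from the upper left, the upper-left edges of thick strokes pick up highlights and the opposite edges fall into shadow.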

And it looks pretty neat:

One could potentially also use the height map to “3D print” one of these paintings onto a canvas using something like a UV ink printer (which is precisely what they did for The Next Rembrandt project).

I’ll be posting more images here soon.

UPDATE (March 29, 2017): I’ve skipped the normal map step and have gone and implemented GIMP’s bump map algorithm (which computes the normals much better and shades them directly without sticking them in a normal map file first). Here’s a new video showing it in action. You may have to view it full screen as the effect of the brush strokes at this resolution is a bit subtle.

FURTHER UPDATES (Oct 16): DEGAS is now officially an “award winning algorithm.” It came in 1st place in the Graphics category at Arts in the Park (I should post some pictures). I also have a gallery of some of my work up here.

Peace,

-Steve